Caching

Octoparts caches the following information in Memcached:

- Responses from API calls

- The configuration data that is stored in the DB

Memcached configuration

Octoparts accesses Memcached in a non-blocking, highly parallel way using Spymemcached, which optimises multiple accesses for the same keys into a single multi-get.

Spymemcached caps the number of items in an optimised multi-get at 4096, so it might be a good idea to pass this number as the -R parameter to your Memcached daemon. The -R option sets the maximum number of requests a server processes from a single connection before yielding. Finding the optimum -R value requires extensive testing and benchmarking in your particular environment.

Versioning

In order to provide powerful support for cache invalidation, Octoparts maintains version information at the level of:

- individual API endpoints (e.g. the getUserProfile endpoint)

- particular values of parameters for a given endpoint (e.g. ‘get me the user profile where userId = 123’)

This allows us to invalidate cached data at two different scopes.

- By increasing the version of the getUserProfile part, any API responses cached with the previous version will be invalidated.

- By increasing the version of the (partId = getUserProfile, userId = 123) tuple, we can invalidate any cached versions of that endpoint’s response for that particular user.

The version information is also stored in Memcached so that it can be shared across all Octoparts instances, in order to improve the cache hit rate. It is also cached in-memory on each instance for performance reasons.
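The two invalidation scopes can be illustrated with a minimal sketch in which the current versions are embedded in the cache key. The class name, key layout and in-memory dictionaries are assumptions made for illustration only; they are not taken from the Octoparts code base.

```python
from collections import defaultdict


class VersionedCache:
    """Toy cache whose keys embed a part version and a parameter version."""

    def __init__(self):
        self.store = {}                          # stands in for Memcached
        self.part_versions = defaultdict(int)    # part_id -> version
        self.param_versions = defaultdict(int)   # (part_id, name, value) -> version

    def _key(self, part_id, params):
        # Old entries become unreachable as soon as a relevant version is bumped,
        # because the key built for later lookups no longer matches them.
        param_bits = ",".join(
            f"{name}={value}@v{self.param_versions[(part_id, name, value)]}"
            for name, value in sorted(params.items())
        )
        return f"{part_id}@v{self.part_versions[part_id]}:{param_bits}"

    def get(self, part_id, params):
        return self.store.get(self._key(part_id, params))

    def put(self, part_id, params, response):
        self.store[self._key(part_id, params)] = response

    def invalidate_part(self, part_id):
        # Endpoint-wide scope: affects every cached response for this part.
        self.part_versions[part_id] += 1

    def invalidate_param(self, part_id, name, value):
        # Narrow scope: affects only responses for this parameter value.
        self.param_versions[(part_id, name, value)] += 1
```

In this sketch, calling invalidate_param("getUserProfile", "userId", "123") turns the entry for user 123 into a cache miss while leaving other users untouched, whereas invalidate_part("getUserProfile") turns every cached getUserProfile response into a miss.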

Cache groups

Sometimes, when one piece of data changes, you want to invalidate a number of cached API responses in one go. For example, if a user of your site gains an activity point for performing some action, you may want to invalidate all data that was generated based on the user’s out-of-date activity point count.

Octoparts provides support for this with the concept of cache groups. Every API endpoint or parameter can be a member of one or more cache groups. One call to the Octoparts cache invalidation API will flush the cache for all members of the given group.

For example, say we have three API endpoints that take userId parameters:

- getUserProfile(userId)

- getUserActivityPointCount(userId)

- getUserPremiumStatus(userId)

Assume that each of these userId parameters has been registered with the userActivityPoints cache group.

When user 123’s activity point count changes, just make one request to

/cache/invalidate/cacheGroup/userActivityPoints/params/123

Cached data from all three APIs relating to that user will be invalidated.
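One way to picture the group mechanism is as a registry that maps each cache group to its member parameters, so that a single invalidation call bumps a version for every member. The registry contents and the function name below are hypothetical and only mirror the example above; the real registration happens in Octoparts itself.

```python
from collections import defaultdict

# Hypothetical registry: cache group -> (part_id, param_name) members.
CACHE_GROUPS = {
    "userActivityPoints": [
        ("getUserProfile", "userId"),
        ("getUserActivityPointCount", "userId"),
        ("getUserPremiumStatus", "userId"),
    ]
}

# (part_id, param_name, param_value) -> version; a bump invalidates old entries.
param_versions = defaultdict(int)


def invalidate_group_param(group, value):
    """Bump the version of every member parameter of `group` for `value`.

    Returns the list of part ids whose cached data was invalidated.
    """
    bumped = []
    for part_id, param_name in CACHE_GROUPS.get(group, []):
        param_versions[(part_id, param_name, value)] += 1
        bumped.append(part_id)
    return bumped
```

Calling invalidate_group_param("userActivityPoints", "123") touches all three endpoints for user 123 and leaves cached data for other users alone.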

Cache headers

Octoparts supports a number of cache-related HTTP headers when communicating both with clients (i.e. frontends) and with backend APIs.

Clients

Cache-Control: no-cache

If the client sets this header when performing an aggregate request, Octoparts will guarantee not to return any API responses that were retrieved from the cache.

Backend APIs

Cache-Control: no-store

If this header is set on an API response, Octoparts will guarantee not to insert the response into the cache. Note, however, that if the response already happens to be present in the cache, Octoparts will not remove it.

Cache-Control: max-age=3600

If max-age is set, Octoparts will cache the response with the given TTL (in seconds). However, if a TTL is also set in the admin screen for that endpoint, the shorter of the two TTLs will be used.

Note that if the response can be revalidated (i.e. it has an ETag or Last-Modified header), then the max-age value will NOT be used to decide the cache TTL. The TTL value set in the admin screen (which may be eternal) will be used unconditionally.

ETag, Last-Modified

If one or more of these headers are set, Octoparts will store their values together with the response itself in the cache.

The next time Octoparts looks up the response in the cache, it will contact the backend API again in order to validate that the cached response is not stale. It will send If-None-Match and/or If-Modified-Since headers. If the backend responds with 304 Not Modified, Octoparts will return the cached response to the client. Otherwise it will cache the new response and return it to the client.

Note that if the response also included a max-age directive, revalidation will not occur until after this period has elapsed. For example, if the backend response had both an ETag and a max-age of 60 seconds, Octoparts will treat the response as fresh for 60 seconds. During the first 60 seconds it will not bother to revalidate the response, but after 60 seconds have elapsed it will start revalidating using the ETag value.
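As a rough illustration of the revalidation request described above, the stored validators map onto conditional request headers as follows. The function name and the dictionary representation of headers are assumptions for this sketch.

```python
def conditional_headers(etag=None, last_modified=None):
    """Build the conditional request headers for revalidating a cached response.

    `etag` and `last_modified` are the values stored alongside the cached
    response; either may be None if the backend did not send that header.
    """
    headers = {}
    if etag is not None:
        headers["If-None-Match"] = etag
    if last_modified is not None:
        headers["If-Modified-Since"] = last_modified
    return headers
```

If the backend answers such a request with 304 Not Modified, the cached response is still valid; any other response replaces it.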

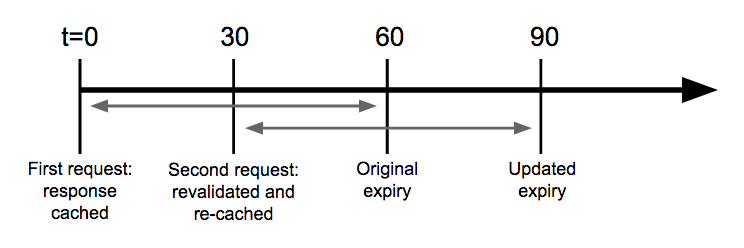

Revalidation and TTL reset

If Octoparts revalidates a cached response using If-None-Match and/or If-Modified-Since headers and the backend responds with a 304 Not Modified, Octoparts will return the cached response to the client and then re-insert that cached response into the cache, thereby extending its TTL.

Note that this means a value can stay in the cache indefinitely, as long as it gets requested and revalidated often enough.

For example, say you have registered an endpoint and set the TTL to 60 seconds in the admin screen. At a given point in time you make a request, and the response comes back with an ETag. This response is inserted into the cache.

30 seconds later you request the same endpoint with the same parameters. The response is retrieved from the cache, and revalidated using the ETag value. The backend responds with a 304 Not Modified, so the response is returned to the client. Its cache TTL is then reset to 60 seconds, so it will not expire until 90 seconds after the original request.

However, the following exception should be noted: if the response has a max-age directive, then ETag revalidation will not begin until after the max-age has expired. In the above example, if there was also a max-age of more than 60 seconds then ETag revalidation would not occur, so the TTL would never be extended.
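The timeline above can be reproduced with a small simulation. This is a sketch under stated assumptions: the Entry class, the lookup function, the explicit `now` argument and the `backend` callable are all hypothetical, and the cache is a plain dictionary rather than Memcached.

```python
from dataclasses import dataclass


@dataclass
class Entry:
    body: str
    etag: str
    expires_at: float    # when the entry drops out of the cache
    fresh_until: float   # end of the max-age window (no revalidation before this)


def lookup(cache, key, now, configured_ttl, backend):
    """Return (body, source), where source is 'cache', 'revalidated' or 'backend'.

    `backend(etag)` is a hypothetical callable returning
    (status, body, etag, max_age); it receives the cached ETag or None.
    """
    entry = cache.get(key)
    if entry is not None and now >= entry.expires_at:
        entry = None                      # TTL elapsed: treat as a miss
    if entry is not None and now < entry.fresh_until:
        return entry.body, "cache"        # inside max-age: no revalidation
    status, body, etag, max_age = backend(entry.etag if entry else None)
    if entry is not None and status == 304:
        # Re-inserting the cached response resets its TTL.
        entry.expires_at = now + configured_ttl
        return entry.body, "revalidated"
    cache[key] = Entry(body, etag, now + configured_ttl, now + (max_age or 0))
    return body, "backend"
```

With a configured TTL of 60 and no max-age, a lookup at 30 seconds is revalidated and the entry's expiry moves to 90 seconds. With a max-age of 120, the same lookup is served straight from the cache, the expiry stays at 60 seconds, and a lookup at 61 seconds goes back to the backend.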

Summary

The interaction between the configured TTL and various cache control headers can get pretty confusing, so here’s a summary of how Octoparts behaves in various situations.

Key:

- Configured TTL = the endpoint’s cache TTL value configured using the admin UI

- max-age = the value of the Cache-Control: max-age header set on the backend response

- ETag/Last-Modified = whether one of these headers is present in the backend response

- Memcached TTL = the actual TTL value used when inserting the response into Memcached

- Revalidates = whether (and when) Octoparts revalidates a cached response against the backend

| Configured TTL | max-age | ETag/Last-Modified | Memcached TTL | Revalidates | Notes |
|---|---|---|---|---|---|
| 0 | - | - | Will not cache | n/a | Setting the cache TTL to 0 disables caching completely |
| None | None | Not present | 30 days | No | 30 days = infinity, because we never learned to count higher than 30 |
| a | None | Not present | a | No | |
| None | b | Not present | b | No | |
| a | b | Not present | min(a, b) | No | |
| None | None | Present | None | Always | |
| a | None | Present | a | Always | TTL can be reset. See discussion above. |
| None | b | Present | None | After b | |
| a | b | Present | a | After b | TTL can be reset. See discussion above. |
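The table can also be read as a small decision function. The sketch below simply mirrors the rows above; the function name, the NOT_CACHED sentinel and the string values for the Revalidates column are assumptions, and a returned TTL of None corresponds to the "None" entries in the Memcached TTL column.

```python
THIRTY_DAYS = 30 * 24 * 60 * 60   # seconds; the table's "30 days"
NOT_CACHED = "not cached"         # sentinel for the "Will not cache" row


def cache_policy(configured_ttl, max_age, revalidatable):
    """Mirror the summary table.

    configured_ttl, max_age: seconds, or None when unset.
    revalidatable: True if the response has an ETag or Last-Modified header.
    Returns (memcached_ttl, revalidates).
    """
    if configured_ttl == 0:
        return NOT_CACHED, "n/a"
    if revalidatable:
        # max-age only delays revalidation; it does not affect the TTL.
        revalidates = "always" if max_age is None else f"after {max_age}s"
        return configured_ttl, revalidates
    if configured_ttl is None and max_age is None:
        return THIRTY_DAYS, "no"
    # Use whichever TTLs are set; when both are set, the shorter one wins.
    candidates = [t for t in (configured_ttl, max_age) if t is not None]
    return min(candidates), "no"
```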

Internals

This flowchart shows the gory details of how Octoparts looks up an API response in the cache.
